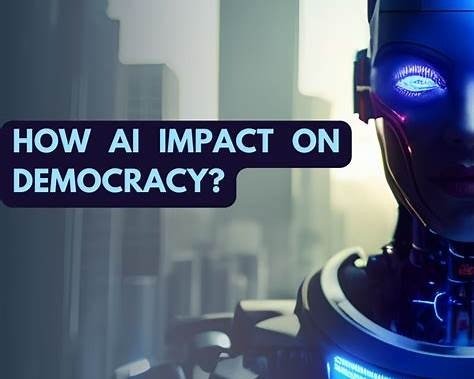

As artificial intelligence increasingly influences how laws are drafted and policies are shaped, a fundamental democratic question emerges: what happens when algorithms help write the rules of society? This in-depth analysis explores how AI could transform lawmaking, the risks to accountability and representation, and the safeguards needed to ensure democracy remains human-centered in an automated age.

Introduction: When Technology Enters the Heart of Democracy

For centuries, laws have been shaped by people—elected officials, legal scholars, activists, and citizens whose values and experiences influenced public debate. Democracy, at its core, is not simply a system of governance; it is a reflection of collective human judgment.

Now, artificial intelligence is entering that space.

Governments around the world are beginning to use AI systems to analyze legislation, predict policy outcomes, identify legal inconsistencies, and even draft early versions of laws. Some tasks that once occupied teams of lawyers for months, such as combing a legal code for conflicting provisions, can now be done in minutes. To many policymakers, this feels like progress.

But for citizens, it raises an uncomfortable question:

If AI starts writing laws, what happens to democratic accountability, representation, and trust?

This article examines that question from technological, political, ethical, and human angles, using real-world examples and practical insights to help readers understand what is at stake.

What Does “AI Writing Laws” Actually Mean?

Despite alarming headlines, AI is not currently sitting in parliament or congress passing legislation. Instead, AI plays a supporting role in the lawmaking process.

In practice, AI is being used to:

- Draft preliminary versions of bills based on policy goals

- Analyze massive legal databases for contradictions or outdated language

- Model the economic or social impact of proposed laws

- Translate complex legal language into simpler summaries

- Assist regulators in updating rules faster than traditional methods allow

These tools are often framed as “assistive,” not authoritative. However, the more governments rely on them, the more influence they quietly gain.

Over time, recommendations can start to feel like decisions.

Why Governments Are Turning to AI in Lawmaking

The growing interest in AI-driven governance is not accidental. Modern democracies are under intense pressure.

Legislators face:

- Increasingly complex societies and globalized economies

- Rapid technological change that outpaces existing laws

- Mountains of outdated or overlapping regulations

- Public frustration with slow political processes

- Limited staff and resources compared to policy demands

AI offers a tempting solution. It promises speed, consistency, and data-backed insights. From a purely operational standpoint, it makes sense.

But democracy is not only about efficiency. It is also about deliberation, disagreement, and values that cannot be reduced to numbers.

Can Algorithms Understand Democratic Values?

This is where optimism meets reality.

AI systems do not understand fairness, justice, or freedom the way humans do. They recognize patterns in data. They optimize toward predefined goals. They reflect the priorities of those who design them.

If an AI system is trained on decades of past legislation, it will tend to:

- Reinforce existing power structures

- Preserve historical inequalities

- Favor measurable outcomes over moral nuance

- Miss emerging values that lack historical precedent

Many of the most important democratic advances—civil rights, labor protections, gender equality—were not “efficient” outcomes. They were moral decisions driven by activism and public pressure.

An algorithm trained on historical data would not have invented those changes on its own.

The Hidden Risk of “Objective” Lawmaking

One of the most dangerous assumptions about AI is that it is neutral.

In reality, AI reflects:

- The data it is trained on

- The goals it is optimized to achieve

- The assumptions of its designers

When lawmakers begin treating AI recommendations as “objective” or “data-driven truth,” democratic debate can quietly disappear.

If a system says a policy is “optimal,” who challenges it?

If a model predicts negative outcomes, who decides whether those outcomes are acceptable?

Democracy depends on disagreement. Algorithms tend to smooth it away.

Accountability: Who Is Responsible When AI Shapes the Law?

A core democratic principle is accountability. Voters know who to blame—or reward—when policies succeed or fail.

AI complicates that relationship.

If a law drafted with AI support causes harm, responsibility becomes diffuse:

- Was it the lawmaker who approved it?

- The agency that deployed the AI tool?

- The private company that built the model?

- The data sources that influenced its output?

AI systems cannot be voted out of office. They cannot testify. They cannot apologize.

This creates an accountability gap—one that democratic systems are not yet equipped to handle.

Real-World Examples That Reveal the Risks

These concerns are not theoretical. They are already playing out.

In criminal justice systems, algorithmic risk assessment tools have been used to guide bail and sentencing decisions. A 2016 ProPublica investigation of one widely used tool, COMPAS, found that Black defendants who did not go on to reoffend were nearly twice as likely as white defendants to have been labeled “high risk,” reinforcing systemic bias.

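In social welfare programs, automated decision systems have flagged thousands of legitimate recipients as fraudulent, causing financial devastation before the errors were corrected. Michigan’s automated MiDAS unemployment system, for example, wrongly accused tens of thousands of claimants of fraud.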

In each case, the core problem was not malicious intent but misplaced trust in automated logic.

Does AI Shift Power Away from Citizens?

Democracy relies on public understanding and participation. When decision-making becomes too technical, it moves out of public view.

If AI increasingly shapes laws:

- Policy debates may shift from public forums to technical environments

- Citizens may struggle to understand how decisions were made

- Power may concentrate among technologists and system designers

- Political choices may feel pre-determined rather than debated

Over time, this can hollow out democratic engagement while preserving its outward appearance.

Elections still happen—but real influence quietly shifts elsewhere.

Can AI Strengthen Democracy Instead of Weakening It?

Yes—but only if used carefully.

When deployed responsibly, AI can support democratic values rather than undermine them. It can help lawmakers understand complex issues, identify unintended consequences, and communicate more clearly with the public.

Used well, AI can:

- Make laws easier for citizens to understand

- Highlight policy trade-offs more transparently

- Reduce bureaucratic inefficiency without erasing human judgment

- Expand access to civic information

The key is ensuring that AI remains a tool, not an authority.

Essential Safeguards for Democratic AI

A growing number of experts and policy frameworks argue that AI in governance must follow clear, enforceable principles.

These include:

- Mandatory human oversight at every stage

- Transparency about when and how AI is used

- Plain-language explanations of AI-assisted decisions

- Independent audits for bias and fairness

- Clear legal responsibility for outcomes

- Public access to AI-generated legislative drafts

Without these safeguards, efficiency can quietly replace legitimacy.

Will AI Ever Replace Lawmakers?

In any genuine democracy, the answer should be no.

The greater danger is not replacement, but deference. When humans stop questioning recommendations because they come from a machine, democratic judgment erodes.

AI can process information.

Only humans can decide values.

The Bigger Question Democracy Must Answer

At its heart, this debate is not about technology.

It is about who decides:

- What is fair

- What risks are acceptable

- Whose voices matter

- What kind of society we want to live in

Those decisions cannot be outsourced without losing something essential.

The future of democracy does not depend on how advanced AI becomes.

It depends on whether humans remain accountable for the choices it informs.

Frequently Asked Questions

1. Can AI legally write laws in the United States?

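No. AI tools can assist with drafting and analysis, but only elected legislators can introduce and pass bills, and a bill generally becomes law only with the executive’s signature or a veto override.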

2. Are governments already using AI in lawmaking?

Yes. A number of governments and legislatures already use AI tools to analyze legislation, and some are experimenting with AI-assisted drafting of regulatory language.

3. Does AI reduce political bias in laws?

Not automatically. AI often reflects existing biases in its training data.

4. Who controls AI tools used by lawmakers?

It varies. Some tools are built in-house by government agencies; many others are developed and licensed by private technology companies.

5. Can citizens challenge AI-influenced laws?

Yes, but legal challenges target the law itself, not the AI system behind it.

6. Could AI improve democratic participation?

Yes, if used to simplify legal language and increase transparency.

7. Is AI in governance unavoidable?

Its use is likely to grow, but how it is governed remains a policy choice.

8. What happens if AI recommendations conflict with public opinion?

In a democracy, elected officials, not AI systems, should make the final decision and answer to the public for it, even when that means overriding an AI recommendation.

9. Are there laws regulating AI in government today?

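Some frameworks exist, such as the European Union’s AI Act, adopted in 2024, which treats certain public-sector uses of AI as high-risk. Comprehensive regulation, however, is still evolving.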

10. What is the biggest risk of AI-assisted lawmaking?

Loss of accountability and erosion of democratic trust.

Final Thought

AI can help governments govern—but it must never replace the human responsibility at the heart of democracy. Laws are not just systems to optimize. They are promises we make to one another.

As technology advances, democracy’s challenge is simple—but not easy:

Use smarter tools without surrendering human judgment.